Abstract

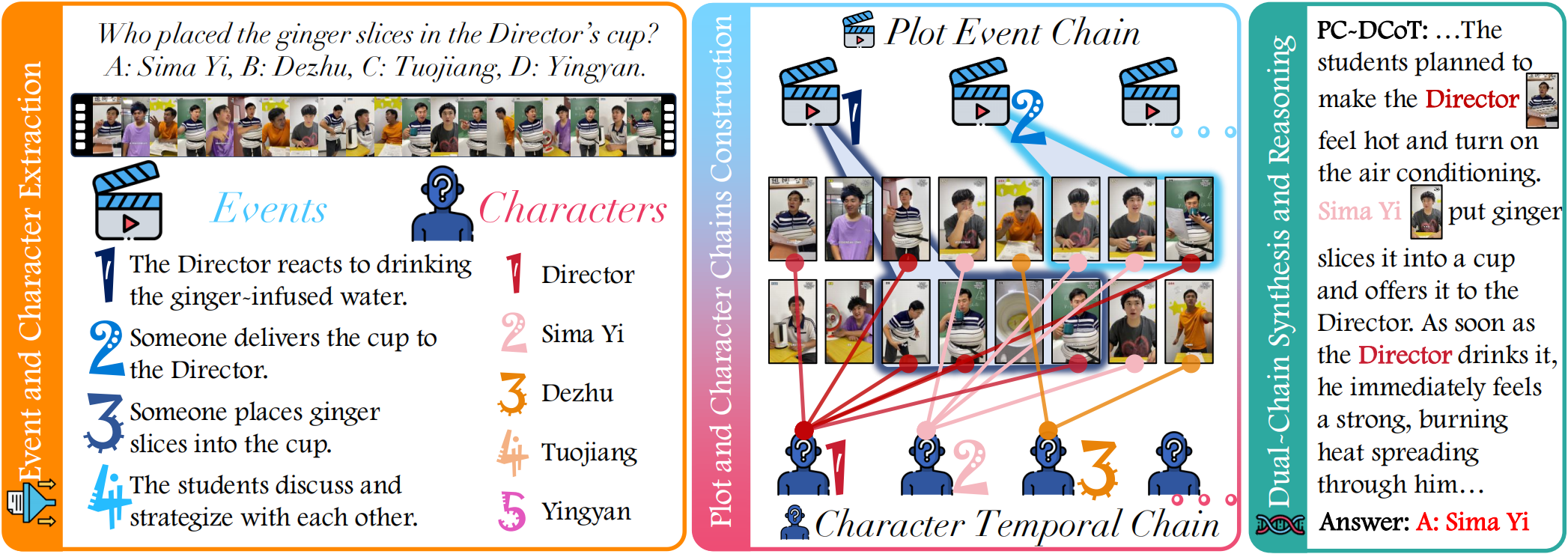

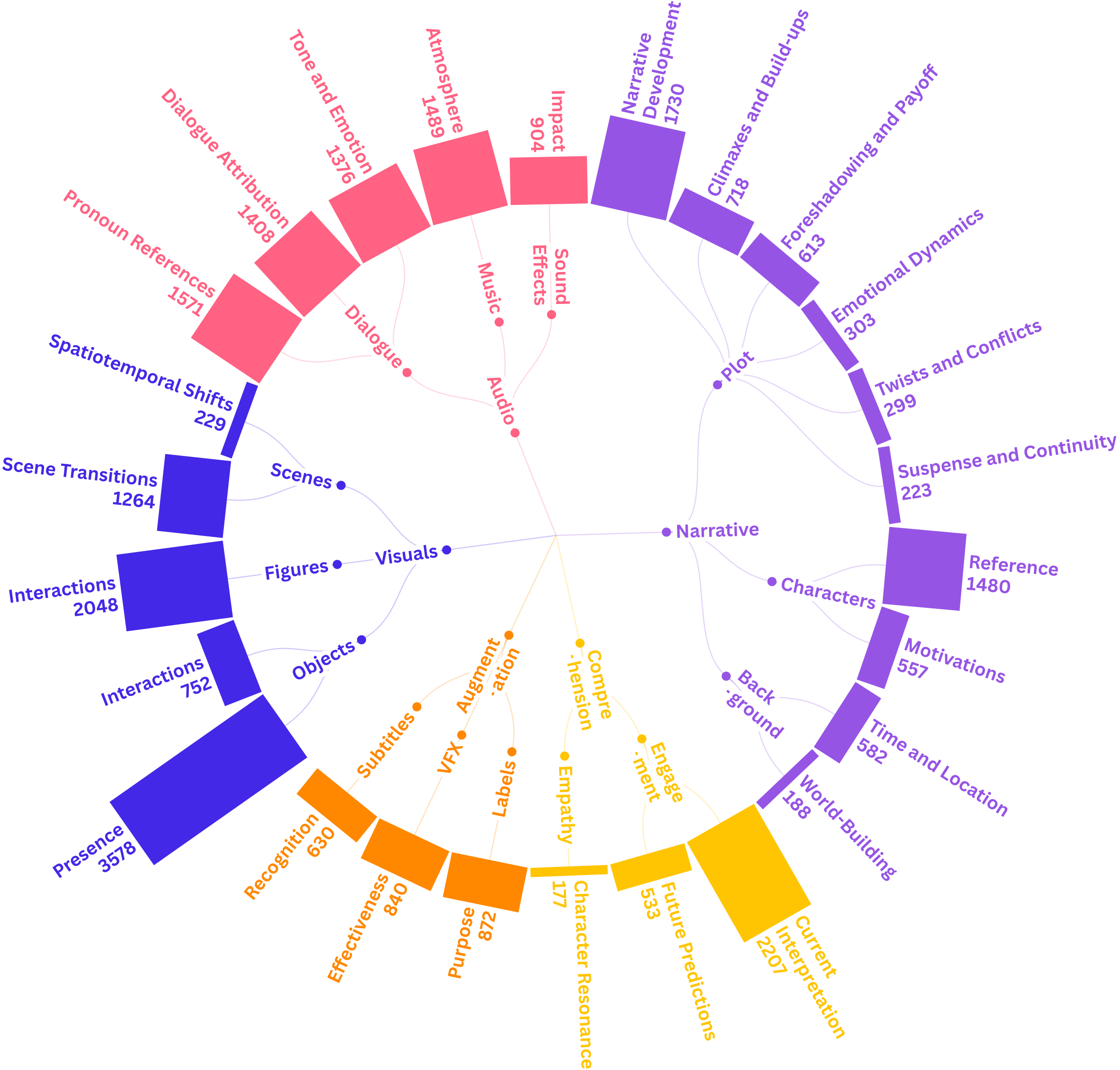

Overview of PC-DCoT framework: Event and character chains are constructed separately from the input, then merged to enable question answering via dual-chain reasoning.
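The caption says only that event and character chains are built separately and then merged; it does not say how either chain is represented. The sketch below is purely illustrative and is not the PC-DCoT implementation. It assumes each chain is a time-sorted list of entries and that "merging" means interleaving them chronologically before assembling a text prompt. `ChainStep`, `merge_chains`, and `chain_to_prompt` are hypothetical names.

```python
import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class ChainStep:
    """Hypothetical chain entry: a timestamp in seconds, a chain label, and a note."""
    time: float
    kind: str  # "event" or "character" (illustrative labels, not from the paper)
    text: str


def merge_chains(event_chain, character_chain):
    """Interleave two time-sorted chains into a single chronological sequence."""
    return list(heapq.merge(event_chain, character_chain, key=lambda s: s.time))


def chain_to_prompt(question, merged):
    """Render the merged chain as plain-text context followed by the question."""
    lines = [f"[{s.time:.0f}s][{s.kind}] {s.text}" for s in merged]
    return "Context:\n" + "\n".join(lines) + f"\nQuestion: {question}"


events = [ChainStep(12, "event", "A arrives at the wedding"),
          ChainStep(95, "event", "A leaves abruptly")]
characters = [ChainStep(40, "character", "B is revealed as A's sister")]
print(chain_to_prompt("Why does A leave?", merge_chains(events, characters)))
```

`heapq.merge` is used because it interleaves already-sorted inputs in a single linear pass without re-sorting. Whatever PC-DCoT actually does, this only works if each chain is already ordered by time.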

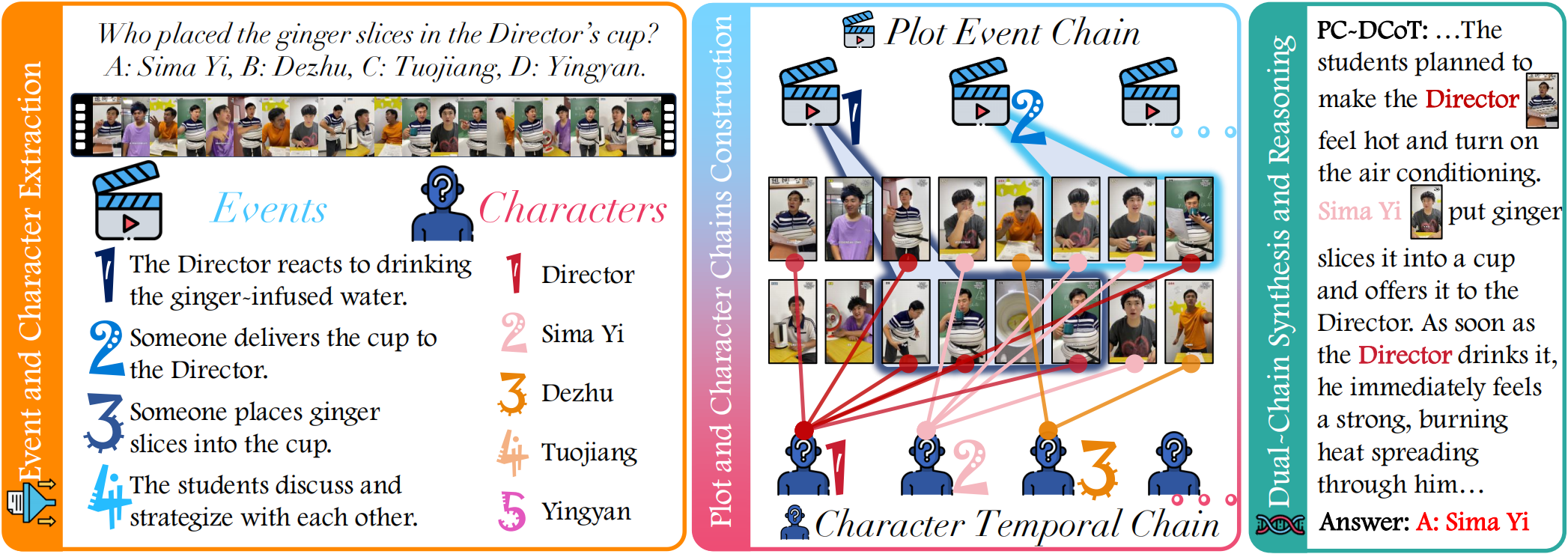

Composition and task distribution of SeriesBench: (Left) The dataset covers two main video categories, series videos and thematic videos, spanning 11 common themes such as Urban Life, Romance, Fantasy, and Food; each series is annotated with its total number of videos. (Right) The task distribution, with sample counts for each task defined in SeriesBench.

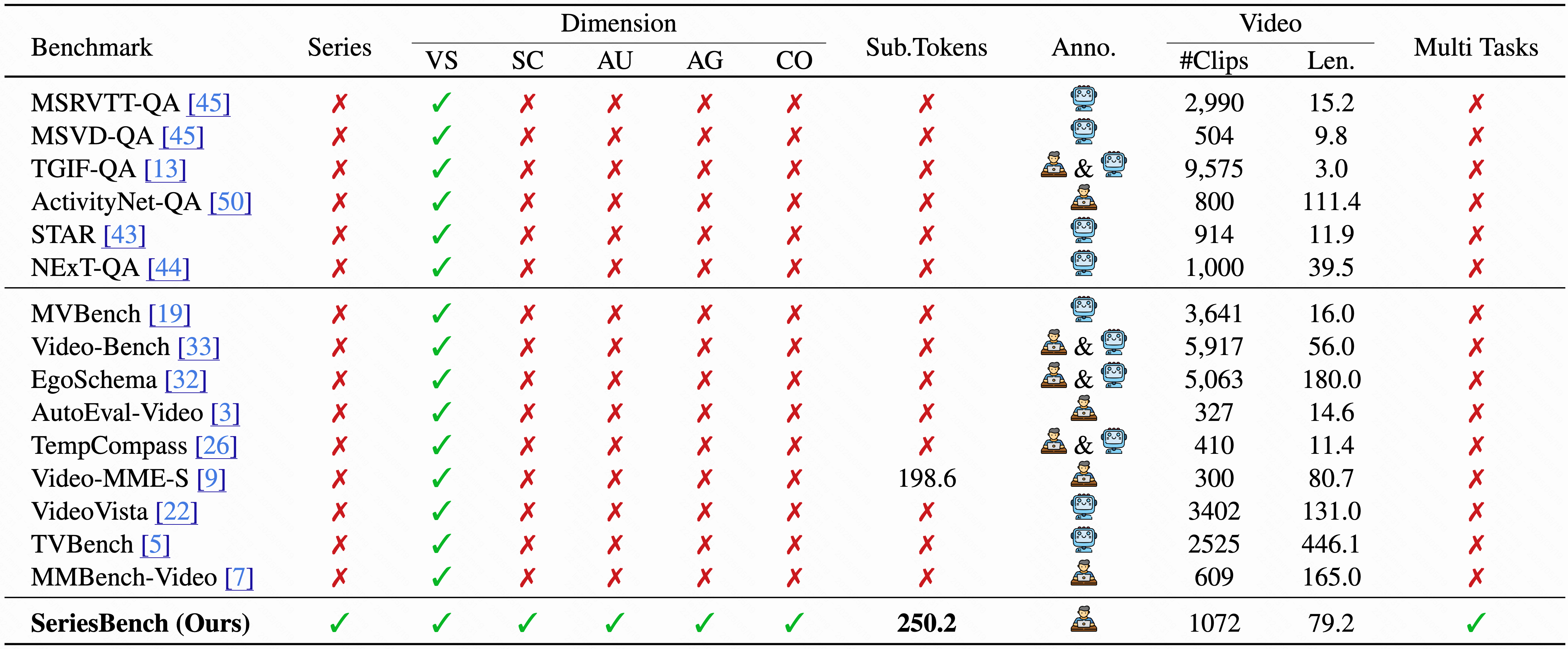

Comparison between SeriesBench and existing benchmarks. SeriesBench uniquely supports narrative-driven series understanding. The table summarizes differences in modality, annotation type, clip count, video length, subtitle density, and task diversity.

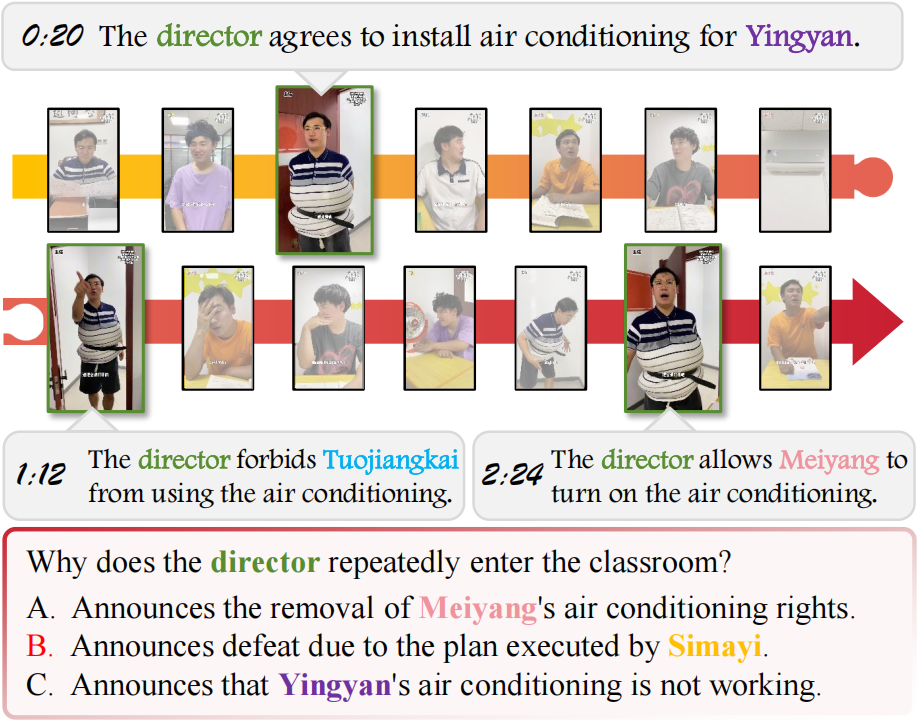

An example from SeriesBench: a task involving multiple events and characters across a long temporal span within a single video.

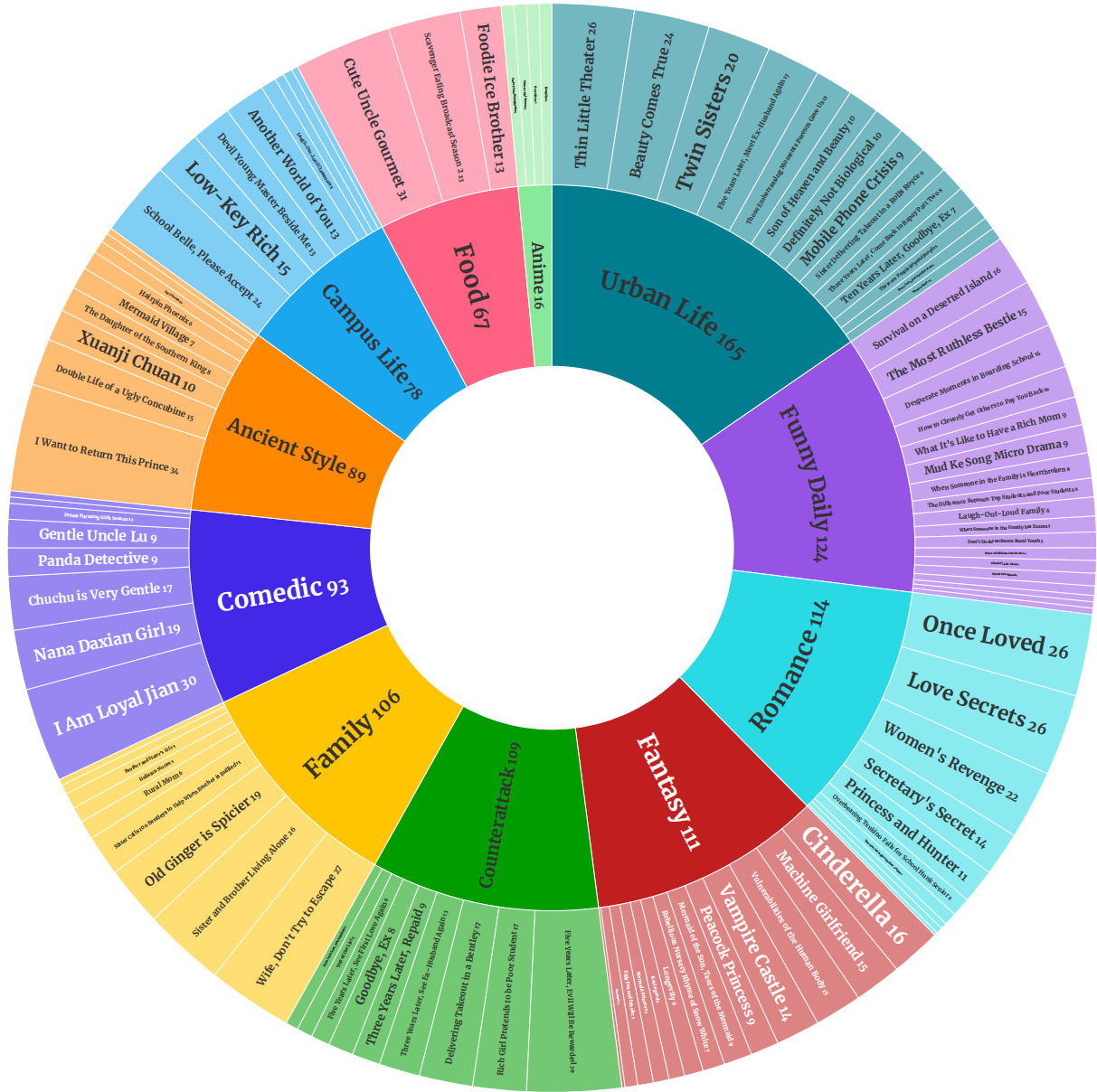

Task diversity in SeriesBench: The table presents a hierarchy of task types defined by key elements of modern narrative videos, with a representative example for each task to illustrate its characteristics.

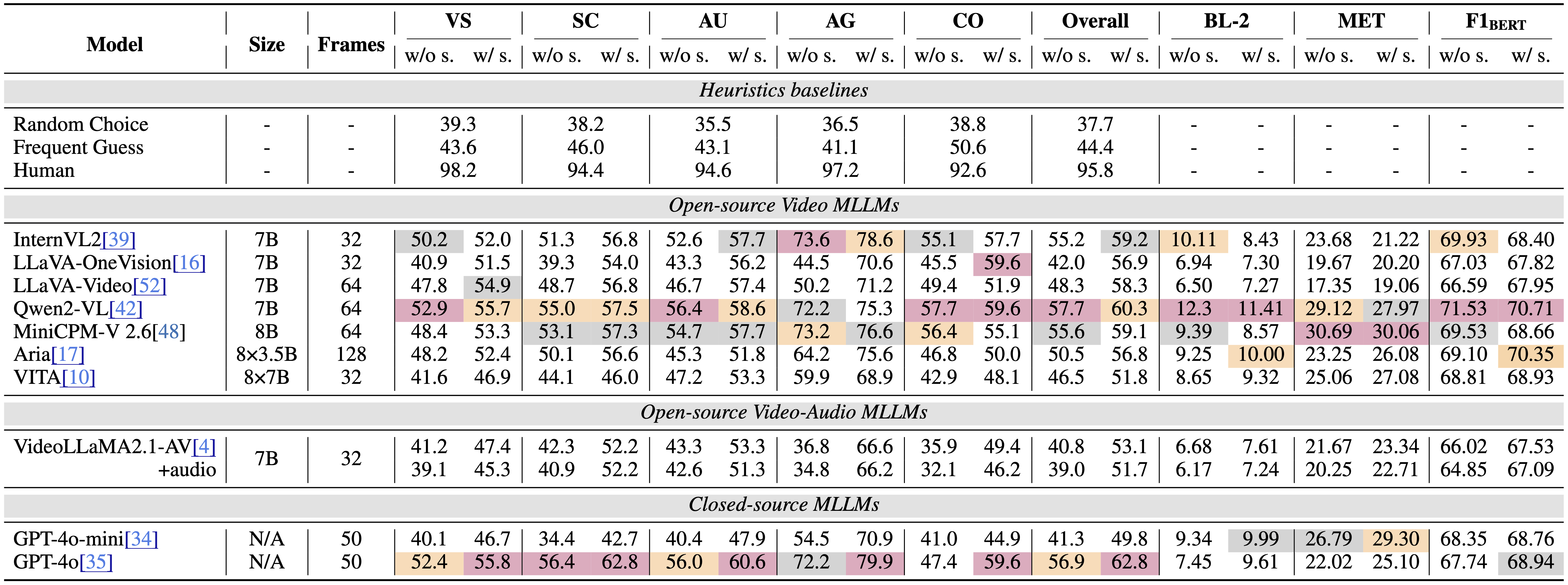

Performance of MLLMs on SeriesBench: Accuracy and the open-ended metrics BL-2 (BLEU-2), MET (METEOR), and F1BERT (BERTScore F1) are reported in %, with † denoting inference with PC-DCoT. Non-applicable results are marked "–"; the top three scores are colored purple, orange, and gray.
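BL-2 reflects n-gram overlap between a model's open-ended answer and the reference answer. The sketch below computes a minimal sentence-level BLEU-2 (clipped unigram and bigram precision, equal-weight geometric mean, brevity penalty) for a single reference. It is assumption-laden. Whitespace tokenization, lowercasing, single-reference scoring, and the absence of smoothing are choices made here. The tokenizer, smoothing, and corpus- versus sentence-level aggregation behind the reported SeriesBench numbers are not stated in this section, so scores from this sketch need not match the table. `bleu2` and `ngrams` are hypothetical helper names.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of the n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu2(candidate: str, reference: str) -> float:
    """Unsmoothed sentence-level BLEU-2 against a single reference.

    Assumes lowercased whitespace tokenization (an illustrative choice).
    """
    cand = candidate.lower().split()
    ref = reference.lower().split()
    if not cand:
        return 0.0
    log_precisions = []
    for n in (1, 2):
        cand_counts = ngrams(cand, n)
        ref_counts = ngrams(ref, n)
        total = sum(cand_counts.values())
        # Clip each candidate n-gram count by how often it occurs in the reference.
        clipped = sum(min(c, ref_counts[g]) for g, c in cand_counts.items())
        if total == 0 or clipped == 0:
            return 0.0  # without smoothing, any zero precision gives a zero score
        log_precisions.append(math.log(clipped / total))
    # Brevity penalty: candidates no longer than the reference get exp(1 - r/c).
    bp = 1.0 if len(cand) > len(ref) else math.exp(1 - len(ref) / len(cand))
    # Equal-weight geometric mean of unigram and bigram precision.
    return bp * math.exp(sum(log_precisions) / 2)


print(bleu2("the cat sat on the mat", "the cat is on the mat"))
```

In this example the unigram precision is 5/6 and the bigram precision is 3/5. Both sentences have six tokens, so no brevity penalty applies and the score is sqrt(0.5), roughly 0.707 (about 70.7 when reported in %).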

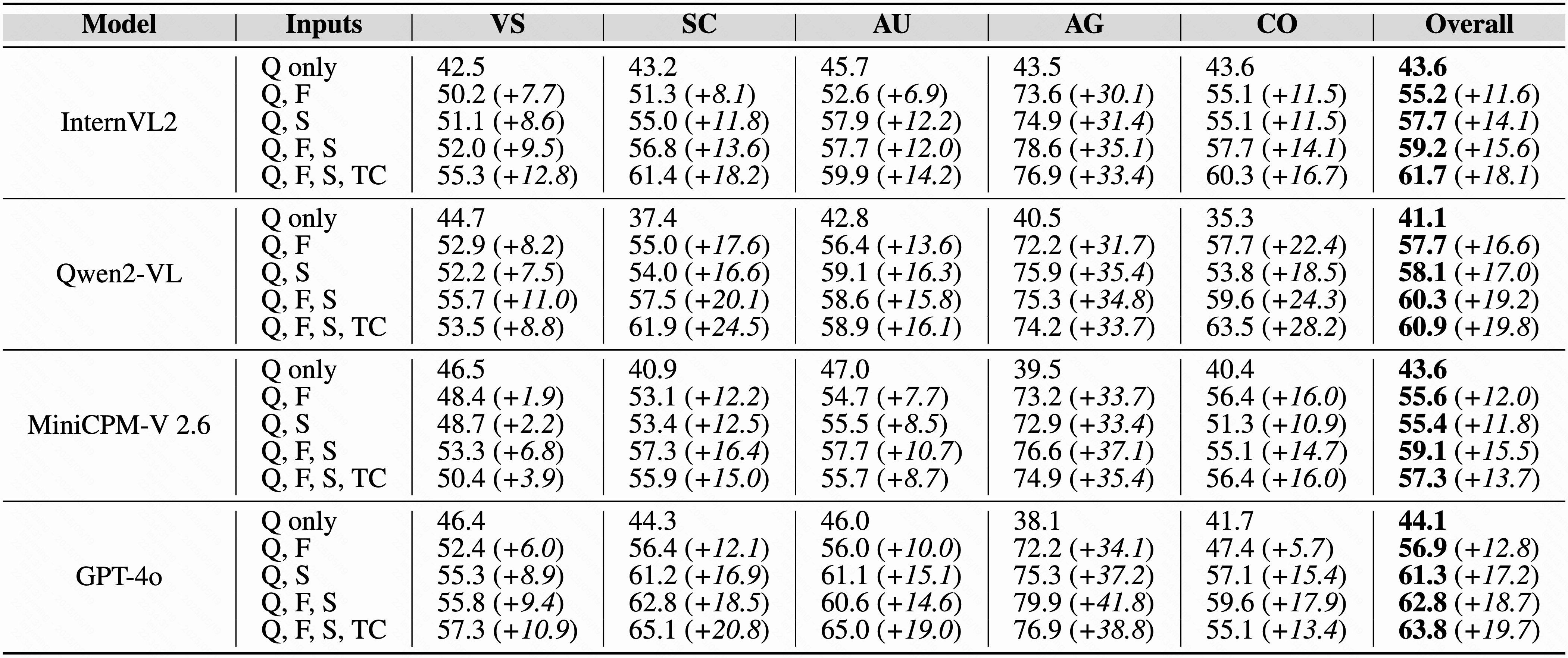

Performance of four top-performing MLLMs with different input modalities: (a) question text (Q) only, (b) Q with frames (F), (c) Q with subtitles (S), (d) Q with F and S, (e) Q with F, S, and thematic-character information (TC).

More qualitative results with PC-DCoT: The PC-DCoT framework improves MLLM performance on complex cases. Correct predictions are highlighted in green, and incorrect ones in red.

Additional open-ended response examples generated with PC-DCoT: MLLMs exhibit improved ability to analyze events, character relationships, and individual roles, reflecting a deeper understanding of narrative-driven series.

@misc{seriesbench2025,
  title={SeriesBench: A Benchmark for Narrative-Driven Drama Series Understanding},
  author={Chenkai Zhang and Yiming Lei and Zeming Liu and Haitao Leng and Shaoguo Liu and Tingting Gao and Qingjie Liu and Yunhong Wang},
  year={2025},
  eprint={2504.21435},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2504.21435},
}